APEX in the AI era

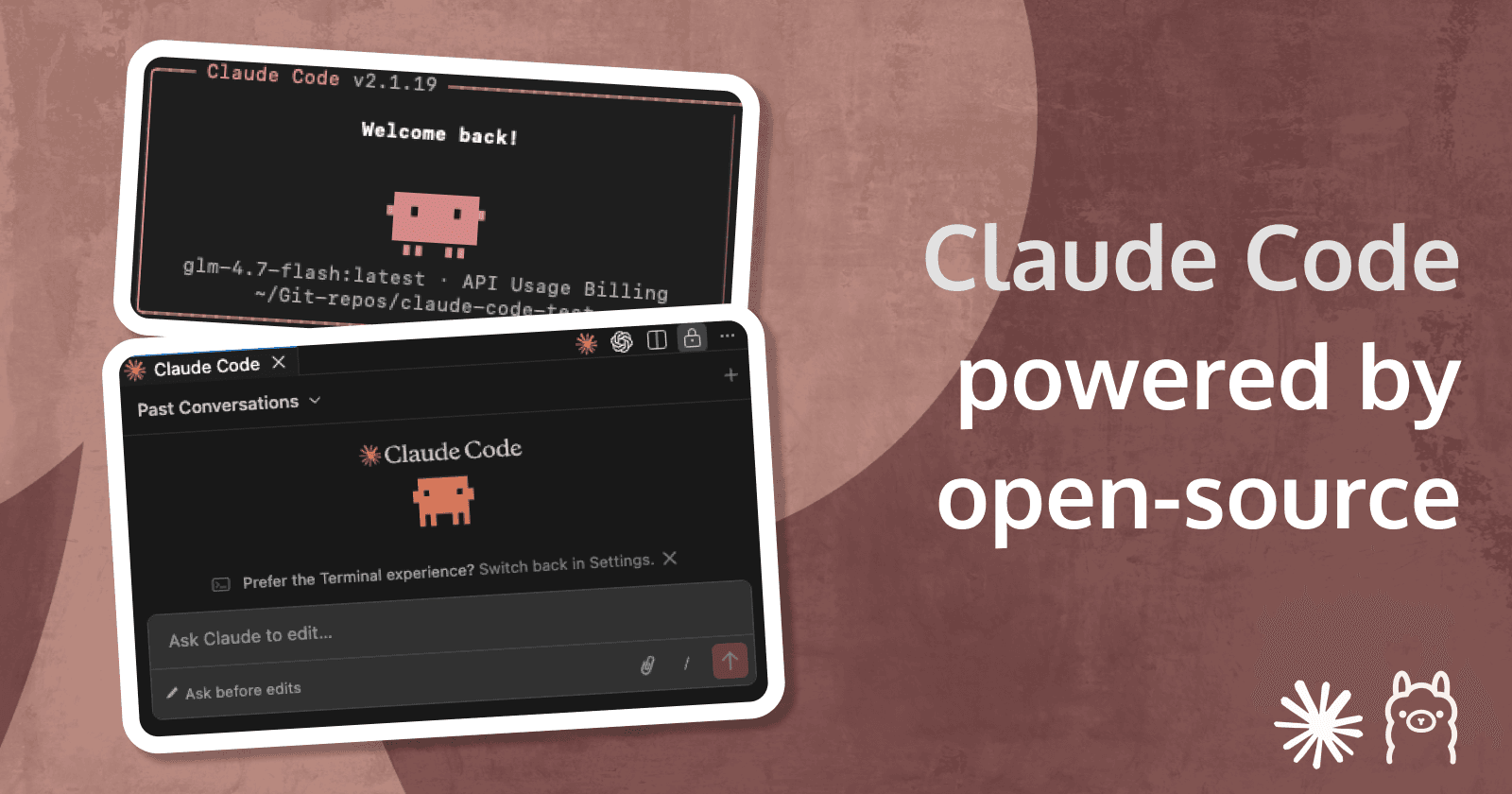

Using your local LLMs with the APEX Generative AI Services

Creating apps built with ❤️ using Oracle APEX. PL/SQL dev. Exploring the AI and ML world. Keen on front-end, visual arts and new technologies. Love travel, sports, photography.

What's new in APEX 24.1

This version of APEX is the first major one that brings AI services closer to developers. In the heart of them all are the Large language Models (LLM). The new Generative AI Services Workspace Utility allows developers to configure access to different LLM providers, which can then be used in various AI tools in APEX. Such features in APEX 24.1 include:

🔸 AI-Assisted App Development

🔸 AI-Assisted SQL Authoring

🔸 AI-Assisted Debugging

🔸 Create Apps using Natural Language

🔸 Conversational AI Dialogs

🔸 APEX_AI API

Supported LLM Providers

In this first AI release, three LLM providers are supported:

🔸 Open AI

🔸 OCI Generative AI Service

🔸 Cohere

💵 And while being very good general purpose models, there are some downsides, associated:

All of the three services are PAID. You need to have a paid account with any of them, to obtain an API Key and configure it in APEX using the

Generative AI ServicesWorkspace Utility.These models are general purpose models - they are not fine-tuned for specific tasks (like Code generation, Medicine or any other area). With OCI it is possible to fine-tune a LLama model, but it would never be as good as commercially supported 3rd party fine-tuned models for specific scenarios like coding or medicine

You need to be connected to the internet. The default 3 options only use online APIs to operate.

Solving the issues / Workarounds

🏆 The goal with this workaround is to:

Use a different LLM model of our choice (not one of the supported by default)

The model could be:

Open Sourceones (likeMistral,Zephyr,phi-3,LLama 3,Qwen,StarCoder,Code LLama, etc.) orClosed Sourceones (likeAnthropic Claude 3,Google's Gemini,Med-PaLM 2, etc).

If needed, the model could be run locally, even on your machine (if powerful enough). This will allow you to develop your APEX Application fully offline (including the use of LLMs and APEX AI services.

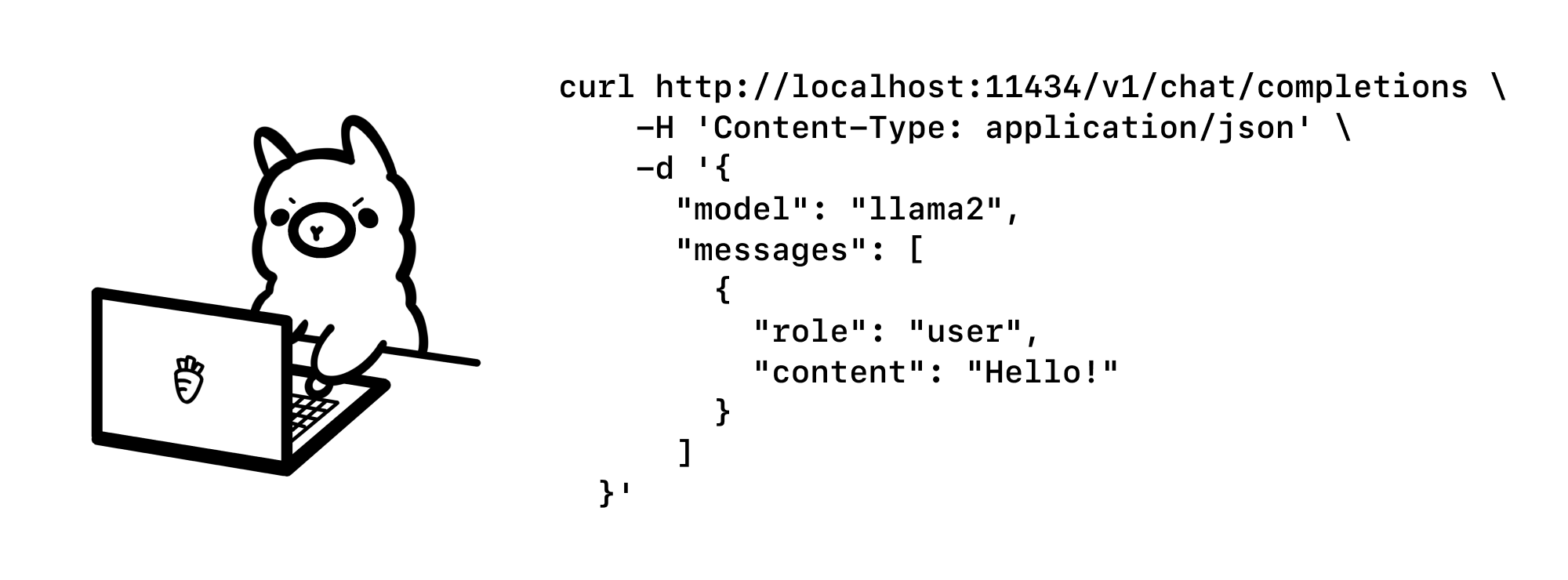

🍀 Luckily, Open AI's API has become a global standard, so many other LLM also provide their services using Open AI compatible format. Some of them support it out-of-the-box, others have wrappers on top of their original APIs that transform it into an Open AI compatible one. Here is a quick example of what it looks like:

curl http://my_llm_service.com/v1/chat/completions \

-H "Content-Type: application/json" \

-d '{

"model": "llama3",

"messages": [

{

"role": "system",

"content": "You are a helpful assistant."

},

{

"role": "user",

"content": "Hello!"

}

]

}'

⚜️ At this point, if your model already supports the Open AI compatible format, and you don't need to run an LLM locally, and you are fine with the cost - great! Skip all the way down to the APEX Configuration steps. Otherwise, continue reading. 📄

🎁 Awesome, you have chosen Open source! It tackles the other pain point - cost. As mentioned above, there are some commercial services, which require subscription to the API in order to run it. However, there is a huge number of Open-source models, which are just great as performance and are free. If you like, you can host them at Hugging Face, Replicate, Fal, Oracle Cloud, AWS or some other GPU providing platform. In this case you will only pay for the GPU hosting.

Local machine setup

I am on a Mac, so I will share the steps for replicating my setup. You can also check the documentation for Windows steps:

Download and Install Ollama - https://ollama.com/

Go their Models Library and pick a model that you like - https://ollama.com/library

In my setup, I am using

OpenHermes 2.5, which is a 7B parameter model, a fine-tuned version of the Mistral 7B model - https://ollama.com/library/openhermes

You could as well use some other model, likecodellama, which is fine-tuned coding model.Ollamaallows you to store many different models on your machine and switch them. Note that the size of each model may vary, as usually they take around 1GB per each 1 billion parameters. So in the case of a 7B parameter model, it will roughly take 7GB of your disk space. Usually a parameter is 8 bits or 1 byte, but it may be more and less - that's not a topic for this blog post. Prepare for another 7GB taken out of your Macbook memory, as soon as theOllamais started (and model loaded into the memory).😅Open your Terminal and type the following to start

Ollamaand load the model:ollama run openhermes2.5-mistral:latest

You can run the some sample cURL command in Terminal to test the model:

curl -X POST http://localhost:11434/api/generate -d '{

"model": "openhermes2.5-mistral:latest",

"prompt": "The tallest building in the world is in ..."

}'

You can notice that the API URL here looks like http://localhost:11434/api/generate, while the Open AI compatible ones- http://my_llm_service.com/v1/chat/completions. What Ollama does for us is to add an Open AI compatible API on top of the default one, so we can use it instead. It's done automatically and does not require any additional effort. See the following blog post for more details:

At this point you can continue the setup in the new APEX 24.1 version. However, if your instance is outside of your local network, you should complete the next step first.

Exposing the local API to the internet

So right now we need to translate our http://127.0.0.1:11434/ to something public like http://public_url.com/ so we can access out LLM from everywhere.

For this task, I'm using Ngrok. It is a service that has a Free tier, so for basic usage no payment will be needed. You can head to their home page and create an account for free - https://ngrok.com/.

Once you have your account created, you will need an API key, so you can activate it on your machine. The setup is super simple, I won't go into details here (probably a topic for another blog post). Here is where you get the Auth token: https://dashboard.ngrok.com/get-started/your-authtoken

For setup instructions, follow the documentation:

https://ngrok.com/docs/getting-started/

In minimal case scenario, you will need 3 commands in this order:

brew install ngrok/ngrok/ngrok

ngrok config add-authtoken YOUR_AUTH_TOKEN

ngrok http http://127.0.0.1:11434

cd /Users/plamen/Dev/ngrok/ngrok.yml

ngrok start --config ngrok.yml --all

Here is an example of the ngrok.yml file:

version: 2

authtoken: abcggdfdeeooJnfdfDkjnfdsDADjdsada

tunnels:

llamacpp:

proto: http

addr: http://127.0.0.1:8080/

ollama:

proto: http

addr: http://127.0.0.1:11434/

host_header: 127.0.0.1:11434

flask:

proto: http

addr: http://127.0.0.1:5000/

You are now ready to go. After running the last command, you should have something similar (as the Forwarding address is listed in the terminal). You can also see the Forwarding address by going to http://127.0.0.1:4040/status :

🌐 Now instead of http://127.0.0.1/v1/chat/completions, you'd be able to access your LLM API from the internet using something like https://03de-2a01-...-27df.ngrok-free.app/v1/chat/completions.

Configuring the APEX Generative AI Services

At this point, we do have our own LLM, with an API endpoint, similar to the Open AI Completions API. This will allow us to use it in APEX (although our LLM is obviously not among the listed ones).

- Go to

Workspace Utilities/Generative AI. It is the place in the new APEX 24.1, where AI services like LLMs (credentials, URLs, models) are stored.

- Hit the

Createbutton in the top right. A new modal window will appear.

Fill in the information, depending on the model you are running and the URL that you have. In case you are using Ollama and Ngrok like me, it will be similar to this:

🔸AI Provider - Open AI - This is the option you need to select as we are going to use Open AI Compatible Chat/Completions API

🔸Name - Here you pick a name for your configuration. It will later be used in the various APEX components, so you give it a meaningful name.

🔸Static ID - Similar to the name, enter a meaningful identifier

🔸Used by App Builder - ✅ - Enable this feature if you want this model to be used in the APEX Builder for the AI services like AI-Assisted App Development, AI-Assisted SQL Authoring, AI-Assisted Debugging or Create Apps using Natural Language. Once you select this feature, a new icon will appear in multiple places where AI can be used in the Builder.

🔸Base URL - https://03de-...-27df.ngrok-free.app/v1 - The base URL of the Generative AI Service. In my case, it is the Ngrok tunnel address, followed by /v1 . This is very important step - you don't need to enter the full path like http://my_llm_service.com/v1/chat/completions, APEX automatically adds the last part (/chat/completions), depending if you use the Chat Assistant region or some of the other Generative AI features in the Builder. You can see this in the HTTP Requests in the Browser Dev Tools, in the Javascript console and in the APEX Debug, if you enable debugging of your session.

🔸Credential - If not already created through Web Credentials, you will need to enter an API Key (if such is required for your service). In my case, I have not set any API Keys in order to use my local LLM, so I will just add some random string (out of which APEX will automatically generate a new Web Credentials record - you can later see it listed in the Web Credentials list).

🔸AI Model - enter the name of your model. It is the same as what you have started in Ollama. In my case - openhermes2.5-mistral:latest, but yours might be starcoder, mistral or any other that you have chosen.

🔸HTTP Headers - In my case, I leave it blank, as I don't have any additional headers needed. Some services require additional HTTP headers for making the REST requests. The Anthropic (Claude 3) for example requires HTTP Headers like these:Content-Type\=application/jsonanthropic-version\=2023-06-01

We are now ready to make use of our freshly configured AI Service. Go to any page of your choice and create a button or any other element that will trigger opening of the

AI Chatbot Assistant.Create a new

Dynamic Actionon the button - in my case - onButton Click. Then select aTrueaction - here comes the new feature in APEX - a newAction, called Open AI Assistant - pick this one.

Having picked it, you can configure how your Chatbot will behave - you need to select the AI Service that we already configured in step 2, Pich a nice

System Prompt, optionally aWelcome Message,Titleand so on. You can also predefine some Messages (similar to the Suggestion Chips in the Faceted Searches). You can also select anInitial Prompt, a Page Item to be used in the conversation and so on - more on these details in another post (or the Oracle APEX documentation - ). Click Save, you are done - you have an AI Assistant in your APEX page, using a custom LLM, hosted on your local machine for FREE! 🎉