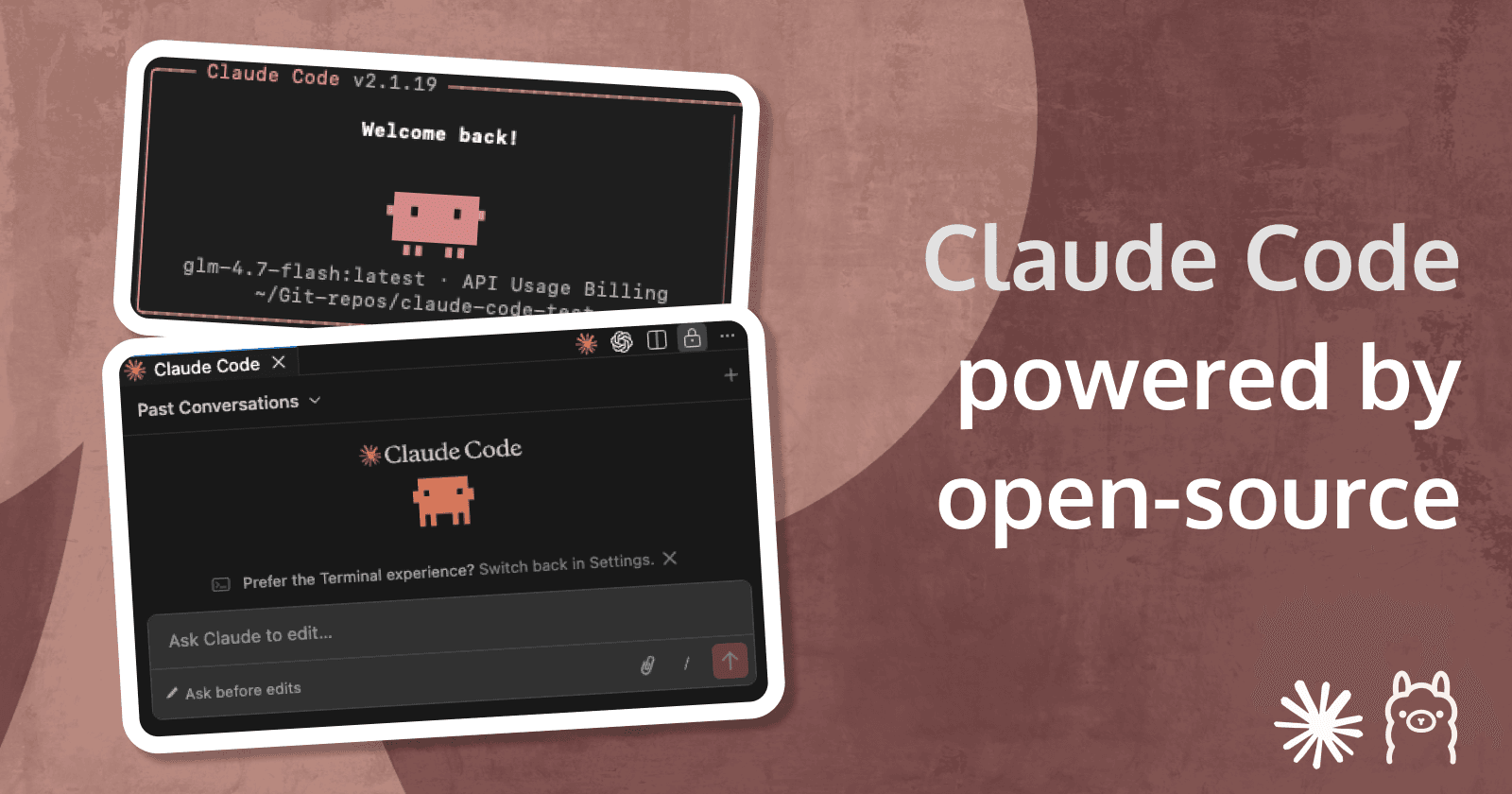

Running Claude Code with open-source models locally

The power of Claude Code - offline, free, secure


Before we start

Before diving into the topic, a personal note from me. This blog post does not discuss the effects of AI on the developer’s world as we knew it. It does not discuss the quality of the code generated by coding agents. It is not a recommendation for or against any of the tools mentioned. These are topics that can be debated forever, and everyone is free to experiment and decide for themselves which tool to use, or whether to use one at all.

What I do know, though, is that AI coding agents, and AI in general, are only going to get better and are here to stay. The pace at which this technology develops is so rapid that we often can’t even remember the names of the models, tools and protocols released day after day. The notes I’m putting here are first of all a way for me to learn and second - a way to hopefully help someone else too.

What is Claude Code (and all similar tools)

It sounds a bit strange to write about it as if it had been here for a long time. The truth is that Claude Code and the other coding agent tools have been around for less than a year. Yet they have become part of daily life for so many developers that it feels like we’ve had them for years. Not only that, but the hype, publicity and massive amount of discussion on social media have left almost no developer indifferent. Around the same time the term “vibe coding” became extremely popular and even one of the words of the year. It shifts the developer’s focus from writing code to explaining what the code should do, reviewing the generated code and directing the coding agents on their next steps. That of course has pros and cons, which could fill a whole other post. But what exactly does Claude Code do, and how is it different from the AI coding practices people have had for the past couple of years?

Instead of copying code back and forth between a chat interface (usually a web app like ChatGPT, Anthropic’s console, etc.) and your coding tools like VSCode, Claude Code acts as an autonomous coding agent that can read your files and repositories, understand the context, make changes across multiple files, and even execute tests and verify if the generated code works.

Claude Code runs in the terminal, in your IDE (like VSCode), on the web and iOS, and even in Slack. As an Anthropic product, it naturally works with Anthropic’s LLMs - Sonnet, Opus, etc. Pricing starts at $20 per month (or $200 for a yearly subscription), which is ideal for small codebases, and goes all the way up to $100 or $200 per month for the Max plans, which give access to the latest and greatest coding models, more usage and support for larger codebases.

And here comes the best part - thanks to Ollama’s compatibility with Anthropic’s Messages API, you can now install Claude Code and use it with open-source models like GPT-OSS, GLM-4.7, Qwen3-Coder and so on.

Claude Code installation

These are the steps I followed on a MacBook. For other operating systems, follow the Claude Code documentation.

The installation below is the native one (Homebrew is also an option). The native install automatically updates in the background to keep you on the latest version.

- Run the following command in your Terminal:

curl -fsSL https://claude.ai/install.sh | bash

- Verify that everything is fine by running:

claude --help

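If everything is fine, you’ll get the list of supported options. In my case there was an issue with my PATH, so I additionally had to run the command below. It targets ~/.bashrc; if your shell is zsh (the default on recent macOS versions), use ~/.zshrc instead.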

echo 'export PATH="$HOME/.local/bin:$PATH"' >> ~/.bashrc && source ~/.bashrc
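If you would rather not append to a startup file blindly, the PATH fix can be sketched as a small helper that first checks whether the directory is already on PATH and then picks the startup file for your shell. The function names below are made up for this post, and the mapping of shells to startup files is a simplifying assumption, not something the installer does for you.

```shell
#!/usr/bin/env bash
# Sketch only: function names are illustrative, not part of Claude Code.

# Succeeds if directory $1 is an entry of the PATH-style string $2
# (defaults to the current PATH).
path_contains() {
  local dir="$1" path_value="${2:-$PATH}"
  case ":${path_value}:" in
    *":${dir}:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Prints the startup file assumed for the shell in $1 (defaults to $SHELL).
rc_file_for() {
  case "$(basename "${1:-${SHELL:-sh}}")" in
    zsh)  echo "$HOME/.zshrc" ;;
    bash) echo "$HOME/.bashrc" ;;
    *)    echo "$HOME/.profile" ;;
  esac
}

# Appends the export line to the startup file only when it is needed.
ensure_local_bin_on_path() {
  local bin_dir="$HOME/.local/bin" rc
  if path_contains "$bin_dir"; then
    echo "$bin_dir is already on PATH"
    return 0
  fi
  rc="$(rc_file_for)"
  echo 'export PATH="$HOME/.local/bin:$PATH"' >> "$rc"
  echo "Added $bin_dir to PATH in $rc; open a new terminal or run: source $rc"
}
```

After pasting the functions into your terminal, run `ensure_local_bin_on_path` and then `claude --help` again in a new terminal window.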

Ollama setup

- The first step is to configure Claude Code’s environment variables to use Ollama. This makes Claude Code use Ollama instead of its default models and does not require you to log in with your Anthropic account.

export ANTHROPIC_AUTH_TOKEN=ollama

export ANTHROPIC_API_KEY=""

export ANTHROPIC_BASE_URL=http://localhost:11434

Verify that the environment variables are set correctly by running the following:

# Either print the values for each

echo $ANTHROPIC_AUTH_TOKEN

echo $ANTHROPIC_BASE_URL

# or print all of them at once

printenv | grep ANTHROPIC
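Keep in mind that exported variables only last for the current terminal session. If you switch between local models and your Anthropic account, a pair of helper functions can make the switch explicit. This is a minimal sketch: the function names are hypothetical, and it assumes that unsetting the three variables is enough for Claude Code to go back to its default behaviour.

```shell
#!/usr/bin/env bash
# Sketch only: function names are made up for this post.

# Point Claude Code at the local Ollama server (same values as in this post).
# An optional first argument overrides the base URL.
claude_use_ollama() {
  export ANTHROPIC_AUTH_TOKEN="ollama"
  export ANTHROPIC_API_KEY=""
  export ANTHROPIC_BASE_URL="${1:-http://localhost:11434}"
}

# Remove the overrides again (assumed to restore the default setup).
claude_use_default() {
  unset ANTHROPIC_AUTH_TOKEN ANTHROPIC_API_KEY ANTHROPIC_BASE_URL
}

# Print the current mode; returns 0 only when both the token and the
# base URL are set to non-empty values.
claude_env_status() {
  if [ -n "${ANTHROPIC_AUTH_TOKEN:-}" ] && [ -n "${ANTHROPIC_BASE_URL:-}" ]; then
    echo "ollama: ${ANTHROPIC_BASE_URL}"
    return 0
  fi
  echo "default"
  return 1
}
```

You could place these functions in your shell startup file, then call `claude_use_ollama` before starting Claude Code and `claude_env_status` whenever you are unsure which setup is active.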

- The next step is to download and run an open-source coding model using Ollama. The one that’s getting a lot of attention lately is GLM-4.7, so I will go with its Flash variant. Consider the size of the model and your hardware: in this case the model of choice is around 19GB.

ollama run glm-4.7-flash
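Before involving Claude Code at all, it can help to confirm that Ollama answers requests in the Anthropic Messages format. The sketch below builds a minimal request body and sends it with curl. It assumes the endpoint lives at /v1/messages on the default port and accepts the headers shown; the function names are illustrative, so treat it as a quick check rather than a reference client.

```shell
#!/usr/bin/env bash
# Sketch only: endpoint path and headers are assumptions, names are illustrative.

# Prints a minimal Messages API request body.
# $1 = model, $2 = prompt, $3 = max tokens (defaults to 256).
# The prompt is inserted as-is, so avoid double quotes and backslashes in it.
messages_payload() {
  printf '{"model":"%s","max_tokens":%s,"messages":[{"role":"user","content":"%s"}]}\n' \
    "$1" "${3:-256}" "$2"
}

# Sends the request body to the local server and prints the raw JSON reply.
ask_ollama() {
  local base_url="${ANTHROPIC_BASE_URL:-http://localhost:11434}"
  messages_payload "$1" "$2" | curl -sS "${base_url}/v1/messages" \
    -H "Content-Type: application/json" \
    -H "x-api-key: ${ANTHROPIC_AUTH_TOKEN:-ollama}" \
    -H "anthropic-version: 2023-06-01" \
    -d @-
}
```

With the model downloaded and Ollama running, `ask_ollama glm-4.7-flash "Say hello in one sentence"` should print a JSON response. If it fails here, Claude Code will most likely fail too.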

Claude Code in action

Once the installation is done and an open-source model is available through Ollama, we are ready to give Claude Code a try. To test our setup, we can create a test repository and see Claude Code in action.

# Create a new directory for your project

mkdir claude-code-test

cd claude-code-test

# Initialize the Git repository

git init

# (Optional) Create an initial file so the repo isn't empty

echo "# My Claude Code local test project" > README.md

git add README.md

git commit -m "initial commit"

Run Claude Code with the model you like

# You have two options to start it

# 1. Run Claude Code using claude

claude --model glm-4.7-flash:latest

# or

# 2. Launch Claude Code from Ollama

# This option will ask you to choose the Ollama model to use

ollama launch claude

# This option will directly run Claude Code with the Ollama model specified

ollama launch claude --model glm-4.7-flash:latest

Claude Code running in claude-code-test, using the glm-4.7-flash model through Ollama.
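If you start Claude Code with a model name that has not been downloaded yet, the session will not get far. A small wrapper can check the output of `ollama list` first. This is a sketch under assumptions: the function names are hypothetical, and the parsing assumes that `ollama list` prints a header row followed by one model per line with the full name (including the tag) in the first column.

```shell
#!/usr/bin/env bash
# Sketch only: function names are illustrative; output format is assumed.

# Reads `ollama list` output on stdin; succeeds if model $1 is listed.
# A name without a tag is treated as "<name>:latest".
has_model() {
  local wanted="$1"
  case "$wanted" in
    *:*) ;;
    *) wanted="${wanted}:latest" ;;
  esac
  # Skip the header row and compare the first column exactly.
  awk -v m="$wanted" 'NR > 1 && $1 == m { found = 1 } END { exit !found }'
}

# Starts Claude Code with model $1, or explains how to download it first.
claude_local() {
  local model="${1:-glm-4.7-flash:latest}"
  if ollama list | has_model "$model"; then
    claude --model "$model"
  else
    echo "Model $model not found locally. Download it with: ollama pull $model" >&2
    return 1
  fi
}
```

From inside the test repository, `claude_local glm-4.7-flash` then behaves like the first option above, with an extra safety check in front of it.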

Claude Code Extension for VSCode

In addition to the CLI, Claude Code can also be used from VSCode. The first step is to install the VSCode extension. You can find it at the link below or by searching in the Extensions view inside the app.

https://marketplace.visualstudio.com/items?itemName=anthropic.claude-code

After you install it, you need to configure the Environment Variables, similar to the CLI. To do that, go to the extension Settings and find the section called Claude Code: Environment Variables. There you will find a link - Edit in settings.json.

Click on it, find claudeCode.environmentVariables and replace it, so it looks like this:

"claudeCode.preferredLocation": "panel",

"claudeCode.environmentVariables": [

{

"name": "ANTHROPIC_AUTH_TOKEN",

"value": "ollama"

},

{

"name": "ANTHROPIC_API_KEY",

"value": "ollama"

},

{

"name": "ANTHROPIC_BASE_URL",

"value": "http://localhost:11434"

}

]

After saving the file, you will now be able to use Claude Code in VSCode using your local Ollama models, without being asked for any Anthropic API keys.
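If the extension still asks you to log in, a quick way to rule out a typo is to check that the settings file mentions all three variables. The sketch below is a plain text search, not a JSON parser, and the function name is made up for this post.

```shell
#!/usr/bin/env bash
# Sketch only: a simple text search, it does not validate the JSON structure.

# Succeeds if file $1 contains every variable name in double quotes;
# prints one "missing: NAME" line for each name that is absent.
settings_has_ollama_env() {
  local file="$1" name missing=0
  for name in ANTHROPIC_AUTH_TOKEN ANTHROPIC_API_KEY ANTHROPIC_BASE_URL; do
    if ! grep -q "\"${name}\"" "$file"; then
      echo "missing: ${name}"
      missing=1
    fi
  done
  return "$missing"
}
```

On macOS the user-level settings usually live in `$HOME/Library/Application Support/Code/User/settings.json`, so a typical call would be `settings_has_ollama_env "$HOME/Library/Application Support/Code/User/settings.json"`. If you edited workspace settings instead, pass that file's path.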

More about Claude Code

If you want to dive deeper into Claude Code, you can explore all the options it supports and go through the documentation on Anthropic’s website.